Terminal-Bench

A benchmark for AI agents on ~100 terminal tasks.

Open-source codebases, datasets and benchmarks we've released, survey write-ups, and LaTeX templates I've built for my own papers, posters, and slides.

Zero-shot visual object tracking with motion-aware memory, built on SAM.

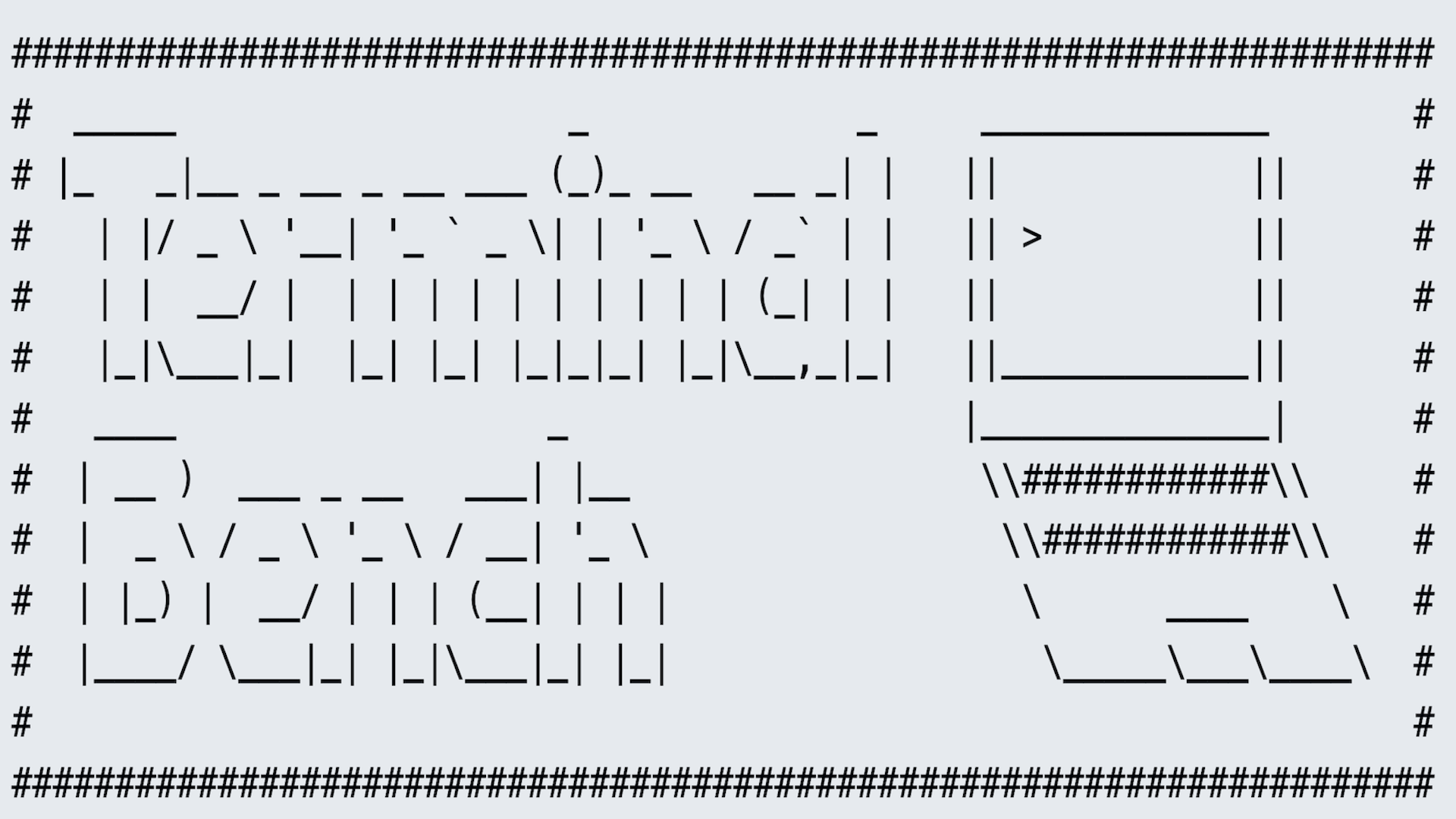

Evaluation suite accelerating the development of large multimodal models.

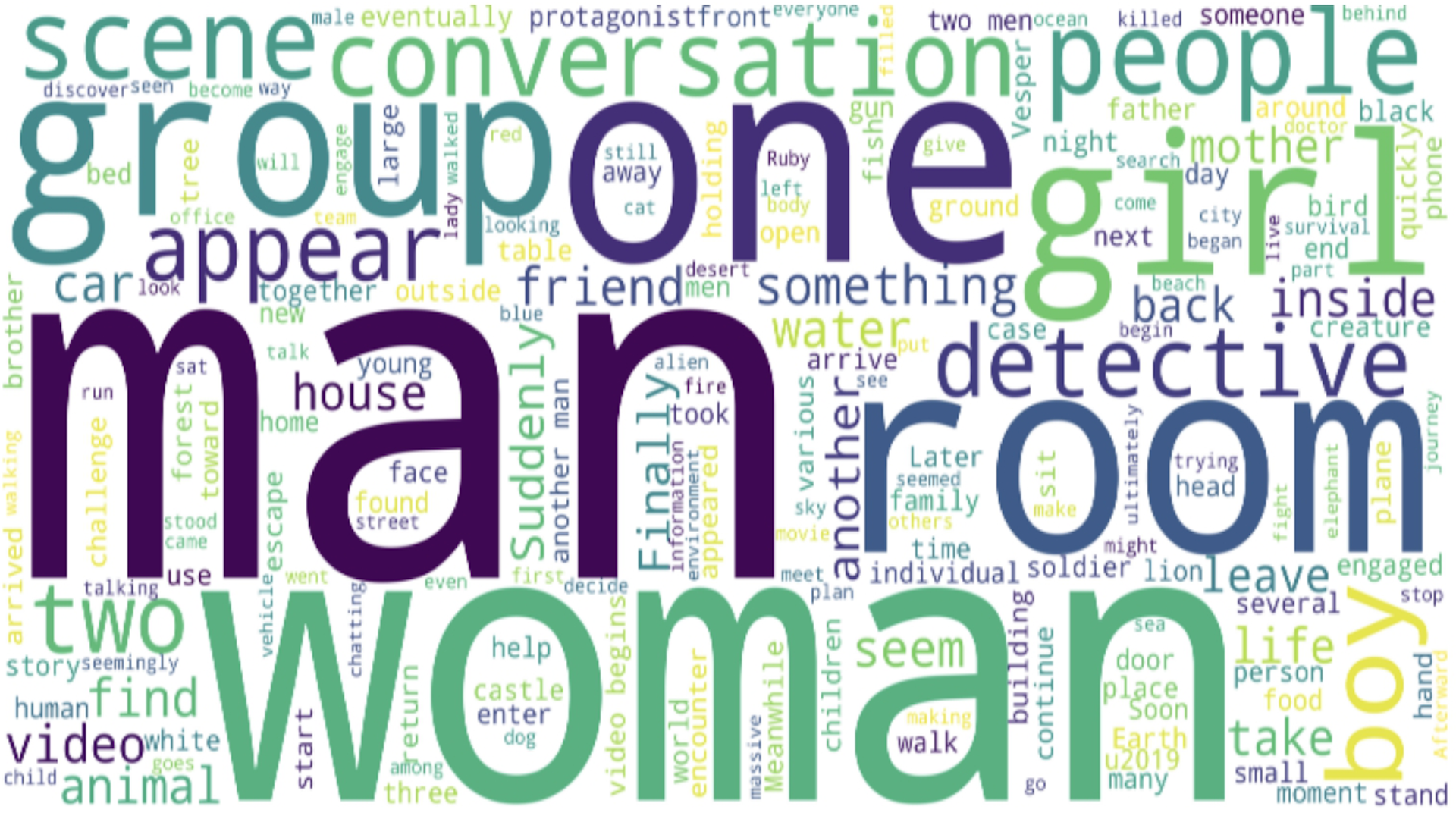

Large multimodal models for long-form video understanding with a memory mechanism.

Text-driven, consistency-aware diffusion video editing.

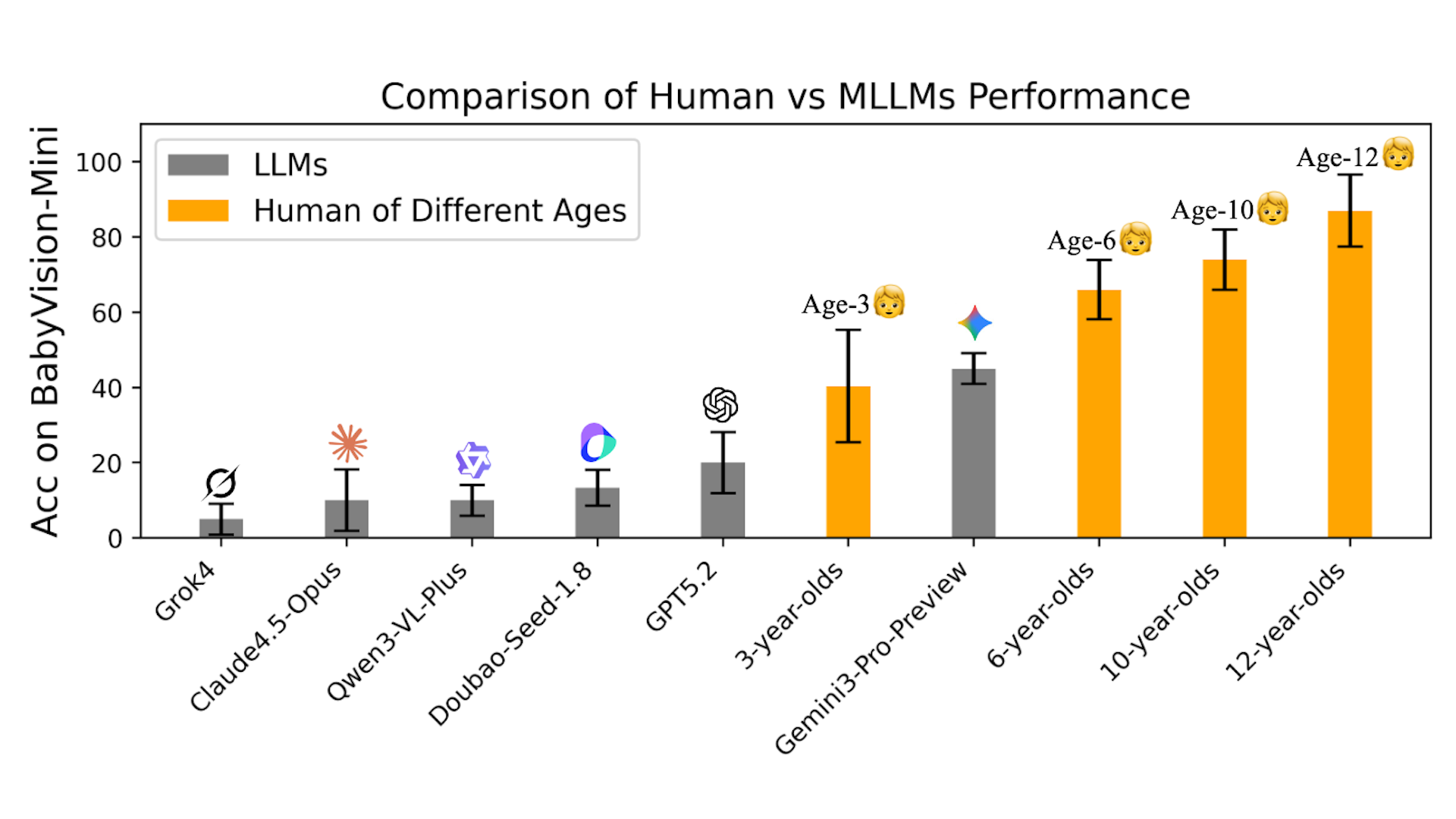

A benchmark for visual reasoning that evaluates fundamental visual skills independent of language shortcuts.

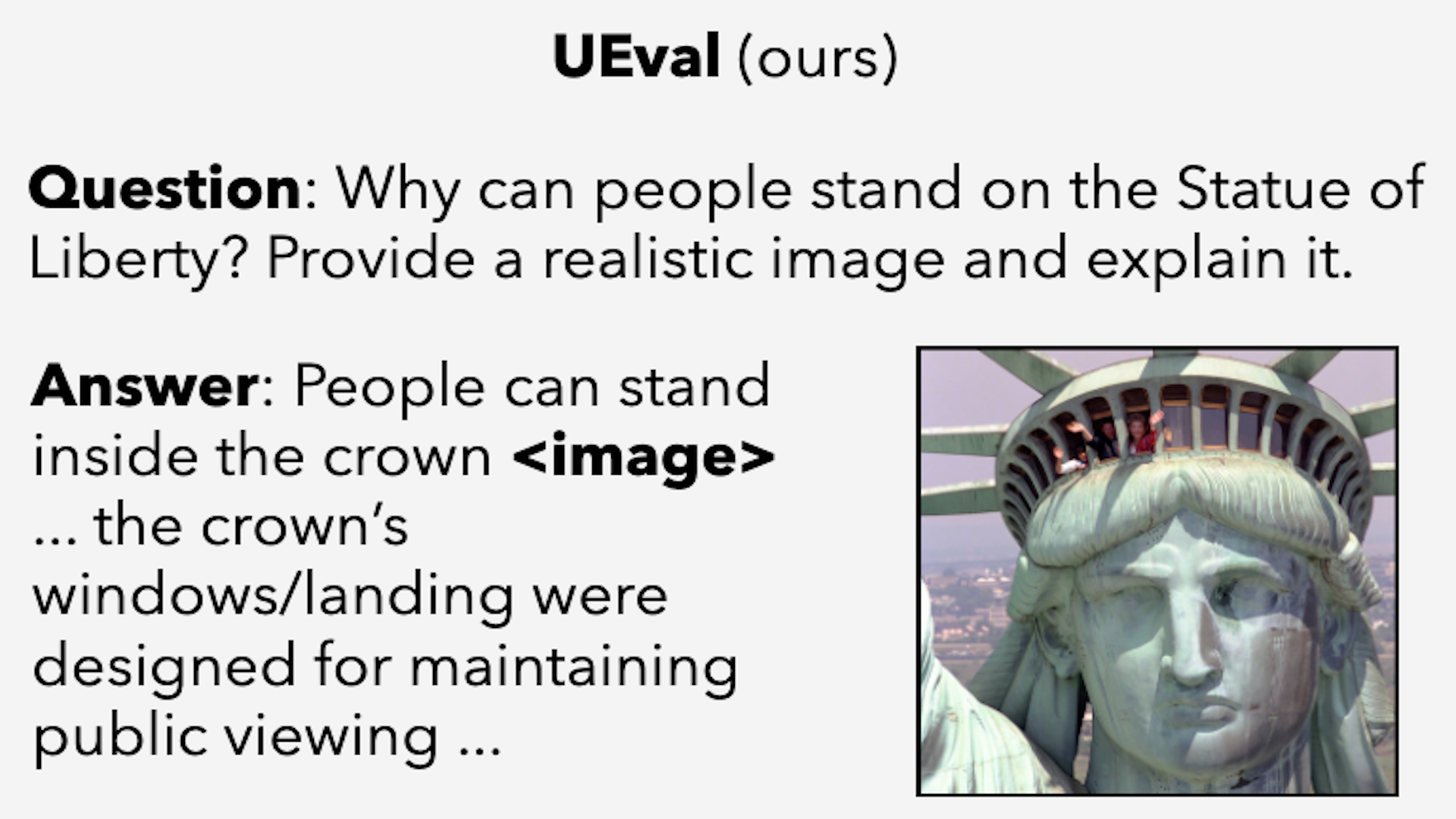

A 1,000-example benchmark with rubric-based scoring for evaluating models that generate both images and text.

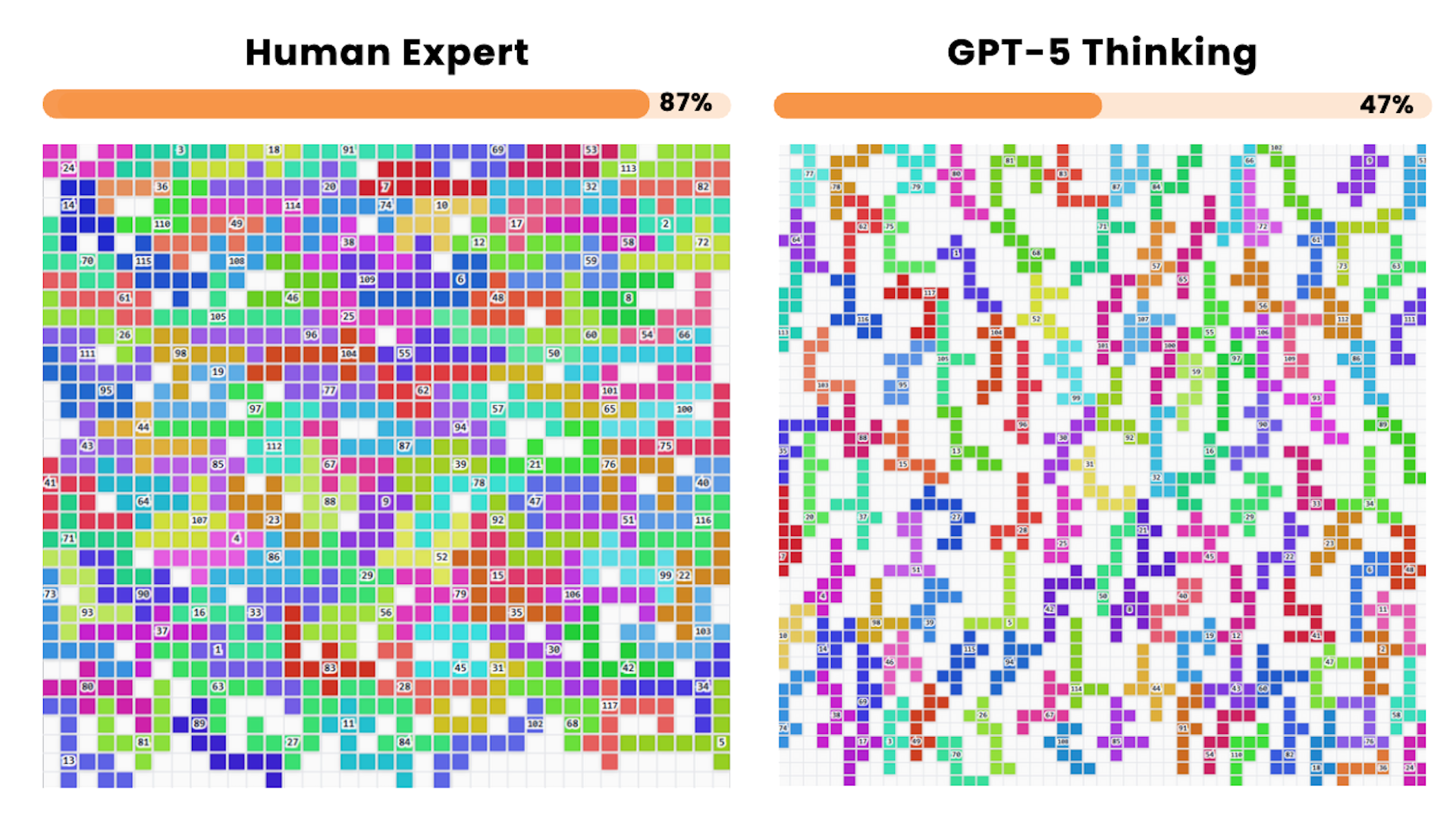

An open-ended benchmark for challenging computer science problems with objective, fine-grained evaluation.

First benchmark dedicated to Dense Video Understanding, focusing on QA-driven high-frame-rate comprehension.

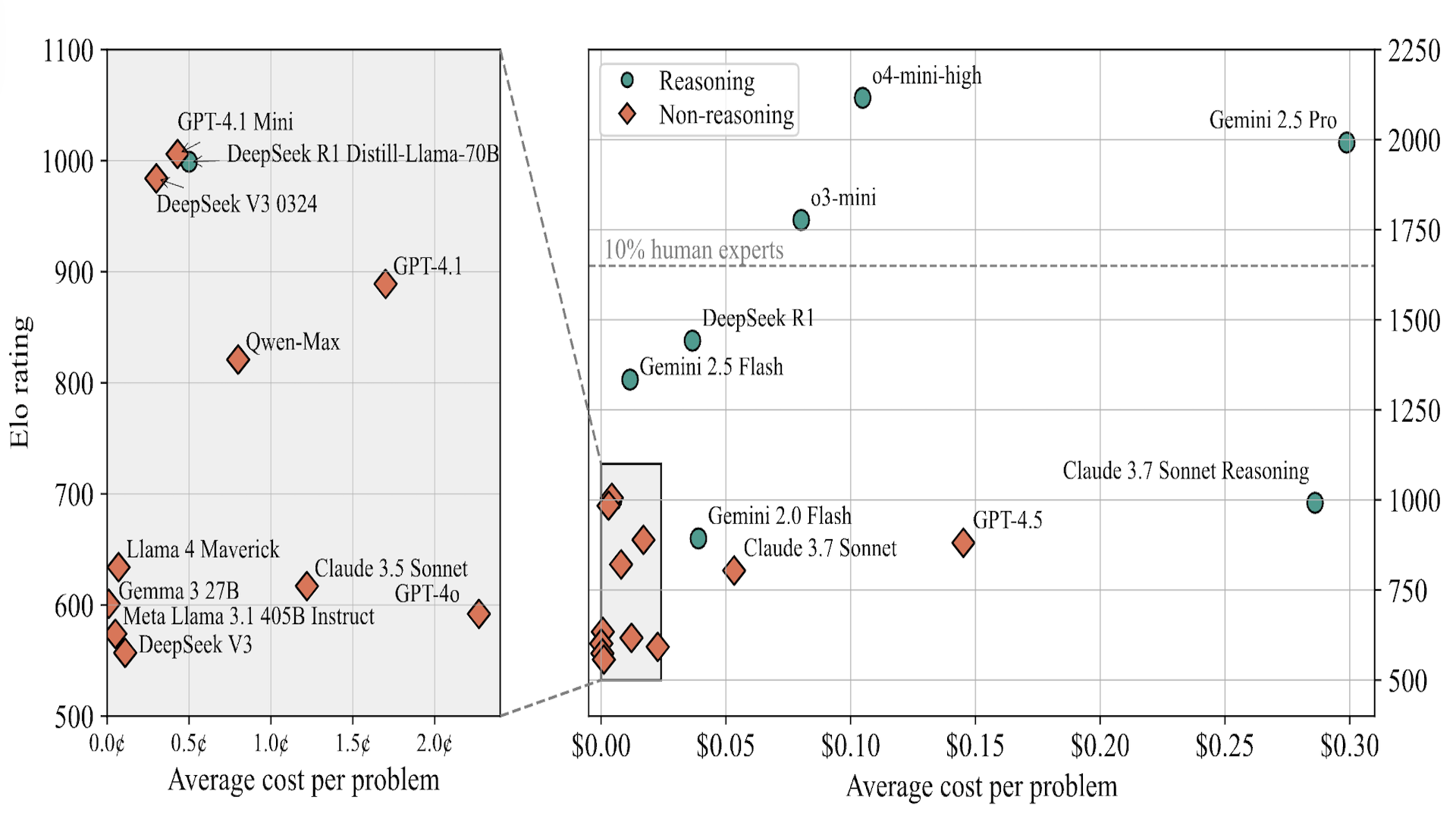

Models like o3-high, o4-mini, and Gemini 2.5 Pro score 0% on hard competitive programming problems.

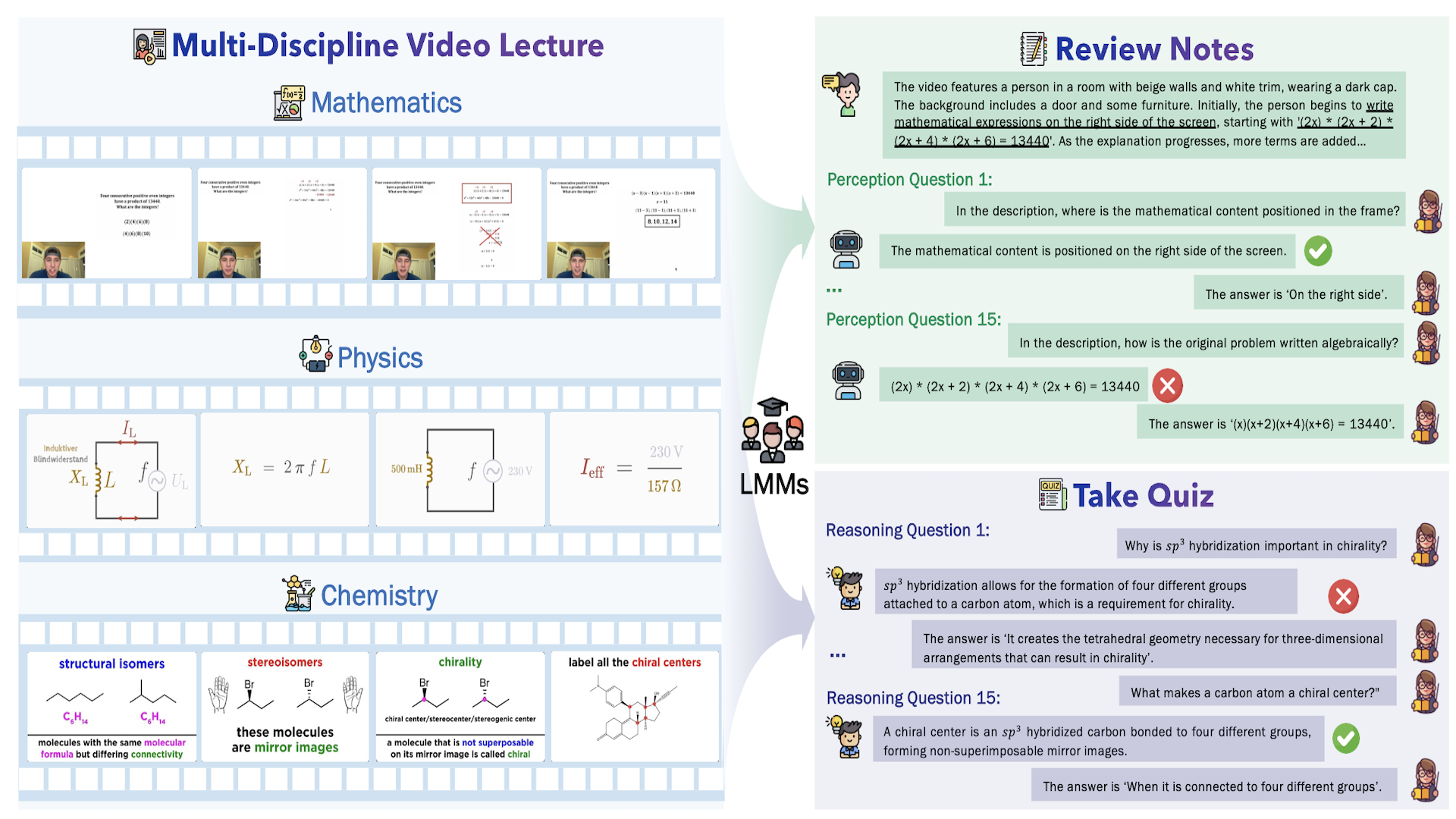

A large-scale benchmark for evaluating how well LMMs understand multi-discipline lectures.

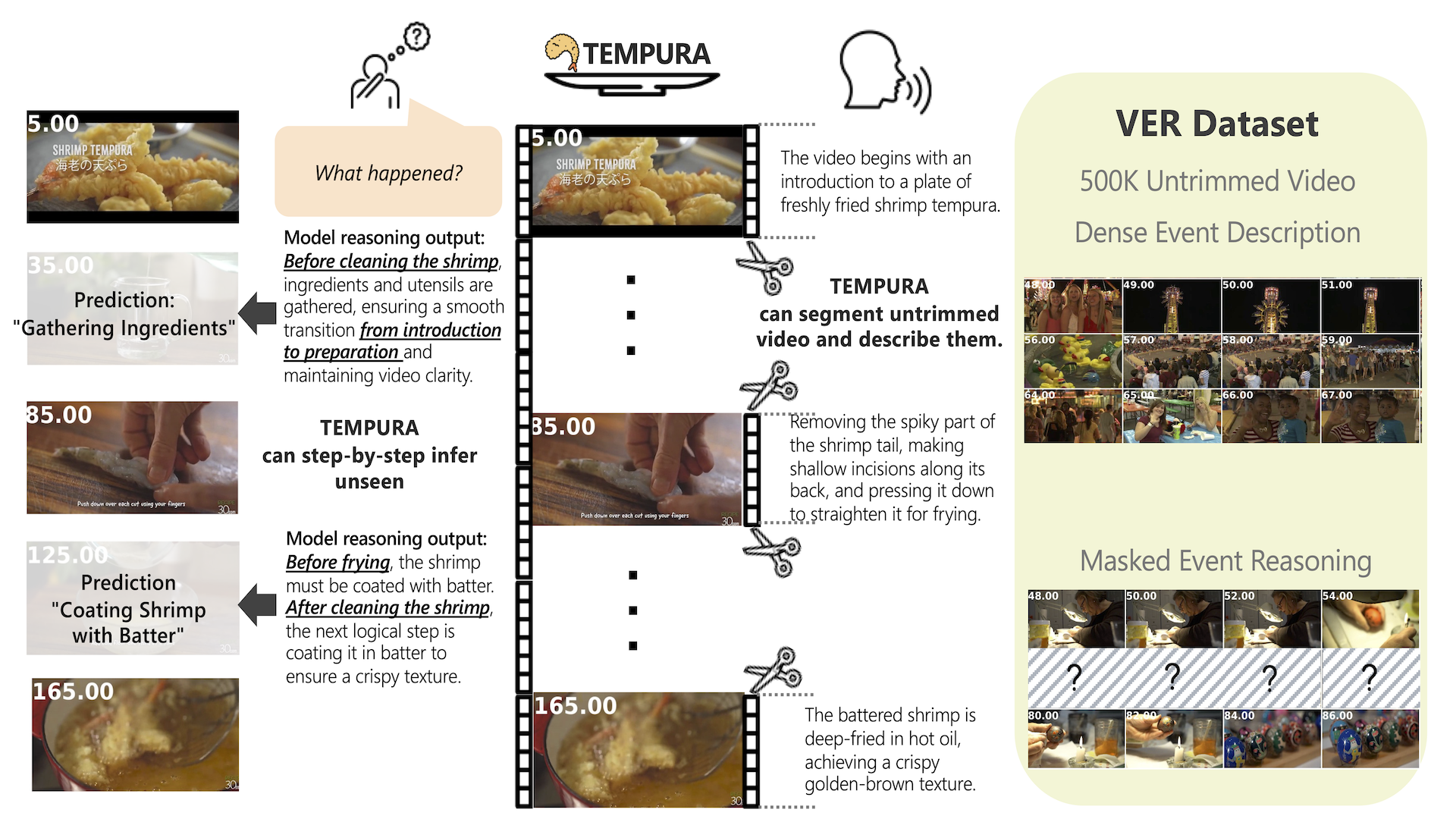

1M reasoning samples about causal event relationships with fine-grained, timestamped descriptions of untrimmed videos.

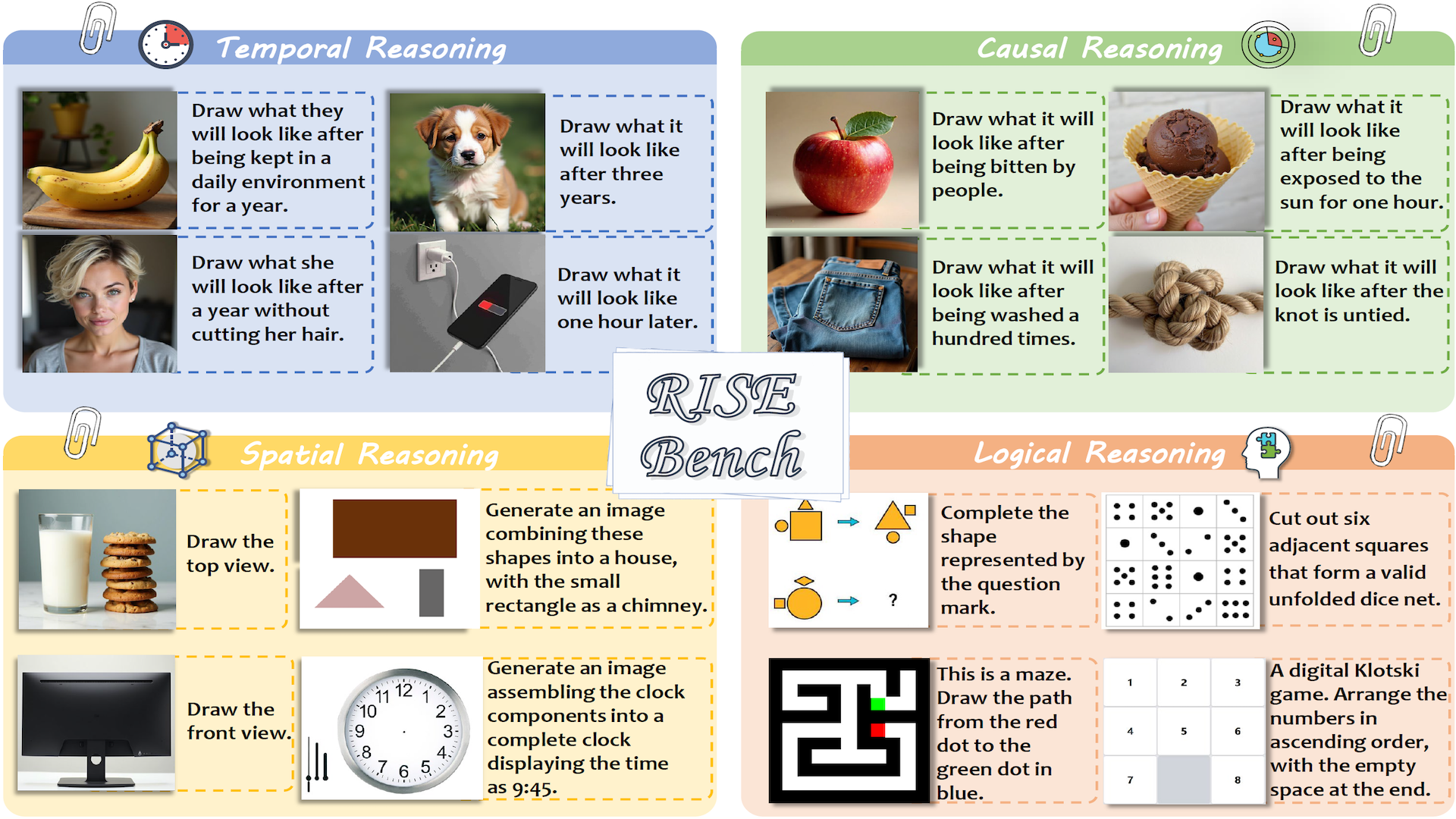

First benchmark for reasoning-informed visual editing across four reasoning types: temporal, causal, spatial, logical.

Over 20k image pairs for training a language-guided reward model for text-to-image alignment with scientific knowledge.

First benchmark for detailed video captioning — 1k+ videos with significantly longer captions plus training recipes.

Human pose estimation dataset with calibrated radar ADC data, 4D radar tensors, stereo RGB images, and LiDAR.

Manually labeled long-video QA and caption dataset — 1,000 videos, each longer than ten thousand frames.